- March 30, 2019

- Posted by: Web Team

Human Level AI is Artificial Intelligence that has reached the same level of intelligence as humans. This article does not talk about how we could achieve or invent Human Level AI; instead, it deals briefly with the consequences of focusing on Human Level AI to the detriment of other endeavours.

Why do we care about Human Level AI?

Many of the concerns and thoughts about AI and its use to innovate are conflated with other AI issues. Human Level AI is one of those issues, but it is often orthogonal to the main issues of using and engaging with AI.

Here are some sticking points associated with AI:

- How do we engage with it and use it to innovate and avoid disruption by other people who are innovating with it?

- What should we do about the possibilities of extreme automation and job losses caused by AI?

- What should we do about the concentration of resources, data and power by big AI companies (as there is now a concentration of wealth and resources in companies like Google, Facebook and Amazon)?

- How should we deal with the issue that Human Level AI might bring on utopias or dystopias?

It’s this last one that I will discuss here.

Why are people talking about Human Level AI?

People are increasingly talking about it for a few reasons:

- The commercial success of AI and machine learning systems, primarily Deep Learning, has got people excited about the commercial opportunities of creating powerful Human Level AI.

- Related to that, the commercial success of systems originating from AI research has led to well-funded commercial companies (such as DeepMind) whose primary aim is to develop Human Level AI. These well-funded companies have more resources than traditional researchers, and are pushing the envelope more quickly.

- DeepMind* itself has had big successes – at least as far as the tech community is concerned – pitting its systems against human champions in Go and computer gaming, raising the profile of Human Level AI research.

- The rapid commercial success of AI has led to a large movement of upper-echelon academics (the ones who have made significant breakthroughs in their AI areas) to companies like Google, Facebook, Uber etc. This has naturally led to these companies being more interested in Human Level AI, since the leaders of their AI programs (the famous AI professors) have often long been interested in developing Human Level AI (which makes sense from their perspective).

- The promise of societal and not merely commercial big wins, as a result of developing Super Intelligence – like curing cancer, solving climate change and so on.

- The prediction that Human Level AI is near. This prediction comes primarily from Ray Kurzweil and is repeated regularly by the Singularity University luminaries (like Peter Diamandis). The prediction is that the exponential development of technology will bring Human Level AI into reality within the next 12 years and the technological singularity soon after.**

Other Names for it

There are other terms for it such as:

- AGI – Artificial General Intelligence.

- Universal Learners.

- Strong AI (as opposed to weak or narrow AI – which we have now – and which is very good at one particular thing like recognising cats or playing chess but poor at everything else).

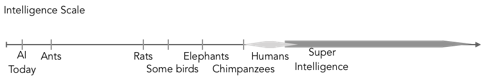

Sometimes the term Super Intelligence (see figure 1) is used, although it suggests greater than human level intelligence. Once a human level intelligence for machines is achieved, Super Intelligence doesn’t seem that far off, because it’s much easier to upgrade (or uplift) machines to higher levels of efficiencies than it is to upgrade living organisms such as humans. It is sometimes suggested that once Human Level AI has been reached, then very quickly (for example in the time it takes me to write this article) it will reach the level of Super Intelligence, although the pathways, mechanisms and (machine) incentives required to achieve this are usually glossed over.

Figure 1: Intelligence Scale. © John Shea 2019

From now on I’m going to use the term AGI – because it’s short and sweet.

When will AGI be developed?

Martin Ford (2018) interviewed a bunch of AI luminaries (some mentioned above, who are in charge of big AI efforts in big tech companies). They had widely divergent views on when AGI will be invented, ranging from 12 years to hundreds of years to never.

Currently AGI seems nowhere in sight. Like... nowhere. There is no sign of it because (the luminaries all agree) there need to be some significant breakthroughs to make it happen.

However, I am of the opinion that, through the magic of human ingenuity (unless the world gets smashed by climate change), AGI will at some stage be developed, probably sooner (this century) rather than later.

Well, do we want it?

Human ingenuity seems to be much better at creating amazing tech that produces commercial outcomes, which, to be fair, does sometimes indirectly improve human wellbeing. So we must ask: is the development of such technology going to create a direct or indirect improvement in “global wellbeing”, as Yoshua Bengio (via Ford 2018) so nicely puts it?

Downsides

Figure 2: Terminator 2: Judgment Day. Despite the dystopian scene – a great movie.

It’s rather uncertain that AGI will on balance bring global wellbeing – there is a case to be made that AGI, when developed, could suddenly get sick of us and turn us into energy-making machines or fertiliser (Figure 2 above). There are many smart ways to try to avoid this (largely diluted by short-term profit motives being the preeminent decider of any actions taken in our society), but whether the likelihood of angry AGI is small or large, the consequences of angry AGI happening are pretty severe.

So the downside of achieving AGI is possible human extinction.

Stuart Russell, via Ford (2018), said: “The problem with that is that if we succeed in creating artificial intelligence and machines with those abilities, then unless their objectives happen to be perfectly aligned with those of humans, then we’ve created something that’s extremely intelligent, but with objectives that are different from ours. And then, if that AI is more intelligent than us, then it’s going to attain its objectives and we, probably, are not!”

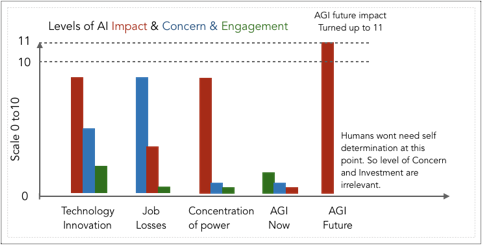

Figure 3: Levels of Impact, Concerns and Engagement with AI. © John Shea 2019

Andrew Ng has repeatedly said words to the effect of “I’ve said before that worrying about AGI evil killer robots today is like worrying about overpopulation on the planet Mars.”

Andrew is a super generous and amazing person, but I’m not sure this analogy makes much sense. Overpopulation on Mars is very unlikely to cause human extinction (or removal of self-determination). In fact, a population on Mars reduces the likelihood of human extinction.

Upsides…?

Often there does not seem to be a common set of goals in our global society to reduce suffering or optimise global wellbeing.

Instead, goals seem to be primarily profit driven, with occasional government interest to very slightly redress the balance. The pursuit of AGI seems to me to be more in the camp of “it would be cool and worth a lot of money”, with some rationalisation that AGI will eradicate disease, give us longer lives and fix climate change.

We don’t need to wait for AGI to fix those problems. Sure, AGI could help out, but it’s a pretty long road, and has a big downside risk.

Example of AGI target: Fix diseases & reduce suffering

When it comes to eradicating disease and living longer, we know that these are the age-related diseases that we pretty much only get when we get old:

- Heart Disease

- Cancer

- Stroke

- Emphysema

- Pneumonia

- Diabetes

- Kidney Disease

- Alzheimer’s and other dementias

If we didn’t get old we wouldn’t (for the vast majority of people) get these diseases.

So in this case it makes far more financial sense and well-being sense (if we cared about reducing suffering) to stop these diseases by improving our health-span or stopping ageing.

What are we doing to avoid getting old (apart from hoping for AGI)?

Pretty much nothing.

We spend hugely on the symptoms of ageing (like cancer, Alzheimer’s etc.) but not on the cause of those diseases (getting old).

Another AGI target: Fix climate change?

What are we doing to prevent climate change?

Pretty much nothing.

On the contrary, for the most part in this country, we are continuing to do our best to produce more fossil fuels and eradicate the Great Barrier Reef.

Why am I going on about these off-topic issues? Because we seem to be way more interested in creating AGI, expending large resources on it because of the promise to solve all the problems we have, which we don’t seem that interested in actually directly solving.

It seems far more rational, rather than spending resources on creating AGI to solve our “big” problems, to focus on and target these problems directly. Doing this now, we could save a huge*** amount of time, effort, money and suffering, rather than hoping for a godlike (or devil-like) AGI intervention in the future.

Summary

Human Level AI is part of the AI hype. It needs to be separated from the standard analysis of using AI to innovate. In my view, the pursuit of AGI is risky and should be a lower priority than creating other technology that directly focuses on reducing suffering (be it through ill health or climate change) or improving our global wellbeing.

Notes

* I’m not trying to paint DeepMind as the villain here; they’ve recently created an AI program called AlphaFold, which predicts protein structure – a very important requirement for understanding and improving cell health therapies. I’m suggesting that for every DeepMind we should have twenty other companies working directly on the problems that AGI is supposed to address.

** I’ve got mixed feelings on the suggested exponential rate of tech development. I tend to move between these three thoughts:

- (grumbling) “where is the singularity when you need it? My sports injuries are really annoying me and I want a pill to fix them.”

- “I’m pretty sure my bank balance is going to go exponential – it’s been flatlining for a long time – apparently that must mean it’s just about to hockey stick upwards!”, and

- I actually like being human – do I really have to become 1s and 0s?

*** I almost used the word “bigly” here, but thought it might backfire. Since no one reads the notes at the bottom of the page, there is no potential for humour backfiring.

References

- Bostrom, Nick (2014). Superintelligence: Paths, Dangers, Strategies.

- Ford, Martin (2018). Architects of Intelligence: The Truth about AI from the People Building It.

- https://en.wikipedia.org/wiki/Superintelligence

- https://en.wikipedia.org/wiki/AI_takeover