- January 30, 2020

- Posted by: Web Team

- Category: Uncategorized

Part of a photo by Mark Basarab on Unsplash

Aerial is my code name for an image analysis service or business which uses a machine learning module for object recognition and detection on images that are taken from a very low altitude such as from a drone. The business of Aerial would be a consultancy of some sort that explores customer needs, creates a media pipeline, probably trains a neural network for custom object detection, does the actual object recognition, and creates reports and visualisations for the client.

Part 3: Machine Learning Options

This is the third part of a four part series where I discuss how I might develop an Aerial Image Analysis Service (or Business) with machine learning. Its a little bit more technical than the first two, go straight to the conclusion if you aren’t that interested in the technical details. Also you can check it out on the demo page here. As explained in the article below it works ok with common animals (not African) and people and objects seen from the side.

The first part did some investigation into the desirability of such a service, the second part discusses the big picture feasibility, this part will concentrate on machine learning options and the final part of commercial viability.

One of the things I really love doing is prototyping something. It’s the aspect of discovery, the classic scientific method, theorise, hypothesise, experiment, discover. When it comes to prototyping the focus is to elucidate as quickly as possible the constraints and requirements that may help or restrict the creation of a real product or service. It is a discovery process, more science than engineering. After discovering whether value can be extracted from the prototypes, and if that value will be paid for (see next article) we can then approach the rest of product development from a more constrained engineering process.

On a side note, the culture of curiosity, exploration, discovery, creativity is one of the reasons why our small AI team at Altitude shift focuses on creating prototypes and proof of concepts (see here). Further, it is common that people want to test the waters in AI by seeing how they can create value with AI. The other approach is a data engineering approach where there is a data audit, the company’s data is amalgamated, cleaned and made available so as give maximum opportunities for future analytics and machine learning systems. This path is much more expensive. A lot of work has to be done before returns are seen. However many companies have extensive technical teams and are comfortable approaching engaging with data analytics and AI by building up an infrastructure that enables future AI and machine learning projects.

Open source

What off-the-shelf tech I could use for the machine learning part of the aerial service?

Whether the input is video or still images our initial goal is to do object detection. Object detection is different from object classification. Object classification tries to determine how to classify an image into a class like dog, cat or dolphin. Object detection identifies where an object (or multiple objects) is in an image and what it is.

There are a lot of open source models out there, most built using Convolutional Neural Networks (CNNs ) as a foundation. I chose to use the SSD network – Single Shot Multibox detector (eg check out this) with PyTorch.

The SSD model has many layers and commonly uses well known classifiers in some of the early layers, so I did a whole bunch of object detection tests using a pre-trained SSD and also some object classification tests with some of the same celebrated classifiers that are a part of the SDD architecture, such as ResNext, ResNet, AlexNet etc. They are famous because they have won Kaggle competitions or are based on models that have won Kaggle competitions.

The models I used are pre-trained because I did not want to spend weeks training them, but as you shall see even If I had decided to train from scratch I would have needed to change significantly neural network architecture.

Here are some of the results of the SSD. When isolating the images for the classifiers I got different but still poor results.

(See the end of the article for authors of the images I used from unsplash)

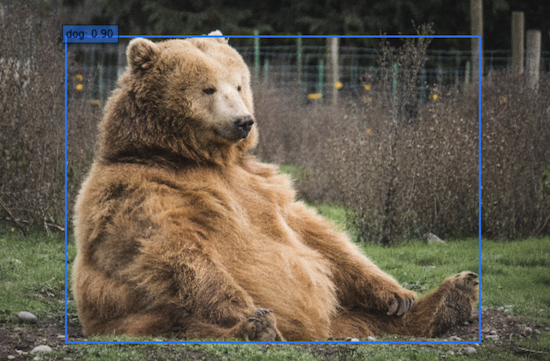

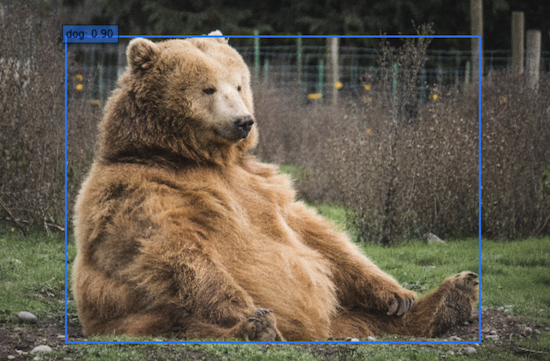

Fig 2: Looking good!

Fig 3: Oops

Fig 4 Oops again

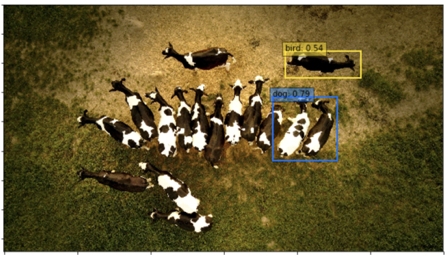

Fig 5: Yay! But I had to zoom it in – when the cow was smaller nothing was seen

Fig 6: Hmm I’m pretty sure elephants supposed to be able to be recognised by this model

Fig 7: Nothing : Probably those pesky shadows! Can we avoid them in real life ;-)?

Fig 8: Pegasi ?

Fig 9: Come on! The image is large and from the side – I made it easy for you!

Fig 10: I can’t see any bird like features

Fig 10: I can’t see any bird like features

Fig 11: Ditto

Fig 12: A cow bird and a double bodied cow-dog

Below is an example of just doing classification – not detection – of an image that I cropped massively.

Fig 13: Classified by various off the shelf classifiers as : chain, bolo tie, sturgeon, racket, loggerhead turtle, hammer head shark x2, kite

Finally three images where nothing was detected.

Figs 14: Nothing to see here, move along. Though for the many horse image above I did get some better results with AWS Rekognition it isolated the horses – but it did classify them as birds.

Keep in mind, this was a quick test, I just wanted to get a feel as to what was out there.

My conclusions are as follow:

- Most of these trained machine learning models have been trained on images of animals where the picture was taken from the side, not from above.

- The examples used to train probably weren’t very diverse, eg a dogs mistaken for bears and vice versa.

- There are a lot of image classes missing (eg dolphins).

- Even where you would expect that training images were taken from above (for example sharks), they still weren’t very good.

- Shadows likely destroy any chance of recognition

There is one other primary reason why these off the shelf models were never going to work and that is, for the model I used (and most Kaggle competition classifiers that I found) the images must be downscaled to 300 by 300 pixels (or a similar small size depending on the version of the SSD), so a hundred sharks in an image are going to be downscaled to nothing – and therefore be unrecognisable. I would have saved a few hours if I had eyeballed the SSD’s code first and realised the impossibility of image detection working on large images, but it was still fun to explore and find all the other ways that these models fail. For this reason you can see I cropped images to smaller sizes to see if the SSD object detection worked better.

If we want to analyse more realistic sized images, say 10-100 times larger, then the network architecture of the model will need to change, and we won’t be able to use pre-trained networks, we would have to train our own. Another option is to run the machine learning model over smaller parts of the image. However, it looks like we would need to do our own model training anyway since it appears that most pre-trained models haven’t been trained with the right sort of images.

Off the shelf – AWS

We didn’t really build something from nothing to do the tests above. The tools (Python, Pytorch), the architectures (ConvNets, SSD) and the trained model weights (ImageNet, ResNet etc) are available for free for us to easily code up something, but is there some sort of image detection service that we can just plug into?

Yes there are lots – but I’m only going to try with one of AWS machine learning service with the assumption that the other vendors will be more or less the same quality.

Of course the problem with using a third party complete solution is that it is harder to suddenly turn around and integrate a bunch of other sensory data like GPS, infrared etc. The vendors try to make their offerings flexible, but then they lock you into a particular architecture, and like any third party software service that can lead to a lot more work rather than a little.

AWS has a service called AWS Sagemaker which seems to use a very similar methodology and network for object detection that we used above (https://docs.aws.amazon.com/sagemaker/latest/dg/object-detection.html), that would probably be good if we wanted to train with new images, because we could easily scale, that is throw a lot of processing power at the training, but can we leverage something from AWS’s offerings which is prebuilt? Is there something that we could just drop some images into an online recogniser and see what happens?

Yes there is. AWS has a service called Rekognition which I test drove, and it performed similarly, perhaps very occasionally a little better than the SSD tested above. So for example sometimes it did a better job at distinguishing objects from the background (though with poor recognition eg manta rays were identified as people). For some images it performed worse (eg the elephants above were recognised as birds) it took a few elephants grouped together and classified them as a lizard. For most large images with small elements – eg pod of dolphins, it found nothing, but every now and then it would do something interesting like outlining 30 out of 40 horses from above – though it thought they were birds. Zooming in on these images sometimes got a result (eg one dolphin was a fish, but another two dolphins were identified as a person).

I was actually impressed with AWS Rekognition – it took no time to set up (though it did take a while to analyse – but hey it was the free demo) and at times seem to perform the actual object isolation better than the SSD above. However for our use cases I think it’s unlikely to be useful.

One final test

Just before publishing this I discovered a group at Facebook have an image detection system called Detectron2 (try it out using these instructions, don’t forget to select – in Google Colab – a GPU runtime) . It is described as:

“Detectron2 is Facebook AI Research’s next generation software system that implements state-of-the-art object detection algorithms”.

I found Detectron2 to be as poor as all the others at Aerial object detection.

Concluding & Going forward

(As mentioned above you can checkout this demo I made – as explained in the article above it works ok with common animals – eg not African – and people and objects seen from the side.)

Initially I thought that there would be a lot of open source models that I could just download and use. However some time learning about and testing these models brought me to the realisation that any good aerial detection is going to need i) its own set of appropriate images to train on, ii) the image size and the classification part of the deep learning model are going to need to be somewhat larger – even if we intend to break images up, and iii) we will need to architect new models and spend time and money teaching them how to recognise aerial images.

Keep in mind that we are prototyping here. This was my process (try something out!) to realise that some significant time and expertise needs to be spent on developing good object detection methods for the needs I explored in the previous blog pieces.

Of course there are ways to cheat (for example break up large images into smaller images), but the silver lining in all of this is that aerial image recognition seems (unlike some other recognition tasks) far from being commoditised. So creating and training new models will create differentiated IP, which should be valuable and difficult to copy without competitors spending the time and money going through the same image acquisition and machine model training. For example tracking animals or detecting other things in Australian landscapes is going probably require training the machine learning on images from Australian landscapes with sheep and other animals and plants in it (eg wombats, dogs, cows, goats, eucalypts, bunyips, yowies, drop bears etc)*. When we include other sensory readings (GPS, infrared, chromophotography ect), building and training your own machine models seems the most likely path to success. If we wanted to explore this aspect further, the next steps would be for us is to try larger networks, and get and/or create a lot of aerial images to test with.

The next blog piece will be on the viability of running an Aerial Image Analysis Service.

If you are interested in discussing this further, or you are interested in my services, please don’t hesitate to get in touch.

*Sheep generally seemed to be recognised quite easily – I cannot be sure if that is because they tend to be different from other animals, or the original training image sets had a lot of sheep photos.

Images authors:

unsplash: wenhao-ji

unsplash: andre-boysen

unsplash: mark-basarab

unsplash: lisa-h

unsplash: gemma-evans

unsplash: seen

unsplash: james-mcgill

unsplash: chirag-saini

unsplash: thomas-bonometti

unsplash: wynand-uys

unsplash: wynand-uys-NFH0X7S6xHA-unsplash.jpg

unsplash: zoe-reeve

unsplash: casey-allen

unsplash: harley-davidson

unsplash: chang-duong

unsplash: meric-dagli

unsplash: rene-deanda